New AI can guess whether you're gay or straight from a photograph

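According to foreign media reports, an algorithm developed by researchers at Stanford University can infer a person's sexual orientation from a single photograph of their face. The researchers built the model from an analysis of more than 35,000 facial images. According to the study, the algorithm identified men's sexual orientation with 81% accuracy and women's with 74% accuracy.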

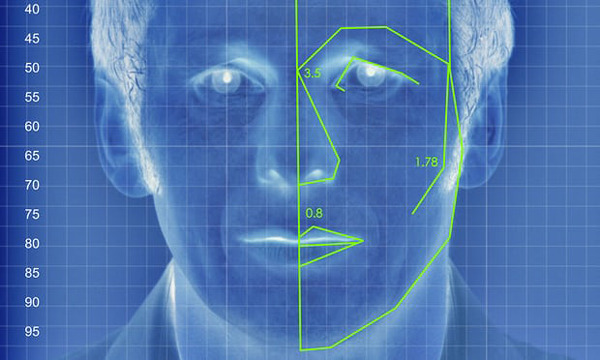

Artificial intelligence can accurately guess whether people are gay or straight based on photos of their faces, according to new research suggesting that machines can have significantly better "gaydar" than humans.

The study from Stanford University – which found that a computer algorithm could correctly distinguish between gay and straight men 81% of the time, and 74% for women – has raised questions about the biological origins of sexual orientation, the ethics of facial-detection technology and the potential for this kind of software to violate people's privacy or be abused for anti-LGBT purposes.

The machine intelligence tested in the research, which was published in the Journal of Personality and Social Psychology and first reported in the Economist, was based on a sample of more than 35,000 facial images that men and women publicly posted on a US dating website. The researchers, Michal Kosinski and Yilun Wang, extracted features from the images using "deep neural networks", meaning a sophisticated mathematical system that learns to analyze visuals based on a large dataset.

The research found that gay men and women tended to have "gender-atypical" features, expressions and "grooming styles", essentially meaning gay men appeared more feminine and vice versa. The data also identified certain trends, including that gay men had narrower jaws, longer noses and larger foreheads than straight men, and that gay women had larger jaws and smaller foreheads compared to straight women.

Human judges performed much worse than the algorithm, accurately identifying orientation only 61% of the time for men and 54% for women. When the software reviewed five images per person, it was even more successful – 91% of the time with men and 83% with women. Broadly, that means "faces contain much more information about sexual orientation than can be perceived and interpreted by the human brain", the authors wrote.

The paper suggested that the findings provide "strong support" for the theory that sexual orientation stems from exposure to certain hormones before birth, meaning people are born gay and being queer is not a choice. The machine's lower success rate for women also could support the notion that female sexual orientation is more fluid.

While the findings have clear limits when it comes to gender and sexuality – people of color were not included in the study, and there was no consideration of transgender or bisexual people – the implications for artificial intelligence (AI) are vast and alarming. With billions of facial images of people stored on social media sites and in government databases, the researchers suggested that public data could be used to detect people's sexual orientation without their consent.

It's easy to imagine spouses using the technology on partners they suspect are closeted, or teenagers using the algorithm on themselves or their peers. More frighteningly, governments that continue to prosecute LGBT people could hypothetically use the technology to out and target populations. That means building this kind of software and publicizing it is itself controversial given concerns that it could encourage harmful applications.

But the authors argued that the technology already exists, and its capabilities are important to expose so that governments and companies can proactively consider privacy risks and the need for safeguards and regulations.

"It's certainly unsettling. Like any new tool, if it gets into the wrong hands, it can be used for ill purposes," said Nick Rule, an associate professor of psychology at the University of Toronto, who has published research on the science of gaydar. "If you can start profiling people based on their appearance, then identifying them and doing horrible things to them, that's really bad."

English source: The Guardian

Translation and editing: Dong Jing

Reviewed by: yaning